Like many (if not most) gamers, I started out gaming on a console. The power of even the earliest home gaming systems paled in comparison to what an equivalent PC was capable of, but it didn’t really matter then. I grew up playing the Nintendo Entertainment System, which was quite a while before the days of multiplatform releases. We’re now in an age where the vast majority of games that come out on consoles also come out on PC, and that shared target is holding back the evolution of gaming as a whole.

Consoles simply can’t match PCs on any measurable metric: graphics, RAM, processing power, or even hard drive space. In effect, this creates an artificial upper limit that I imagine quite a few game developers are unwilling to cross, lest they lose the chance at a profitable multiplatform release.

Despite their shortcomings, consoles have been an amazing game changer for the industry in a lot of ways. They effectively democratized gaming and made it easy for a family to drop a few hundred bucks, plug in their machine, and get right to playing. Any PC gamer worth their salt can tell you stories of frustrating driver errors or the need to edit an .ini file. However, those downsides pale in comparison to the innumerable advantages that PC gaming offers in sheer versatility.

CD Projekt’s console crusade

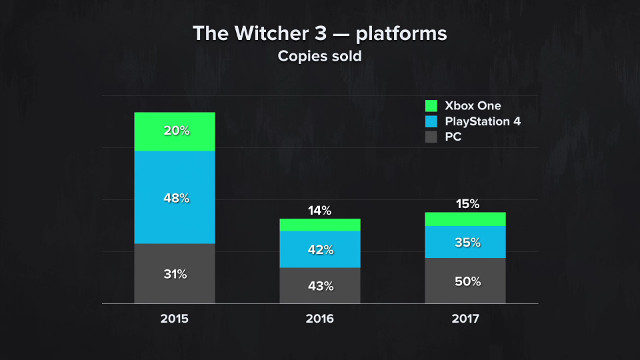

As an example, let’s look at some sales data for The Witcher 3, sourced from CD Projekt Group’s financial results conference for 2017. While the PC version certainly pulled in some respectable numbers, the Xbox One and PS4 versions combined made up the majority of the game’s sales in 2015 and 2016, with the split only evening out in 2017. Should a developer wish to make a game that requires more than current consoles can provide, they may very well be leaving half or more of their sales on the table. That’s a financial risk that could easily bankrupt a company.

Numbers like these raise the question: do developers hamstring their own games in order to achieve these sales? I recall playing stuff like the original Battlefield 1942 and looking forward to the day when we might see hundreds or even thousands of players battling it out on the same server. After all, it mostly came down to having enough power to handle it. Internet connections were getting faster, hard drives were getting bigger, and RAM and processors were getting speedier every few months. Every six months brought a new graphics card, and with it some crazy new tech showing up in the latest games. Sure, there was a bit of lag while development caught up, but we got the newest stuff as soon as possible.

But what about now? Well, how often do we see something that is genuinely PC-exclusive on the basis of computing power? Games like Star Citizen (which is very unlikely to ever come to consoles) used to be the norm. Developers were pushing boundaries and exploiting the absolute upper limits of consumer-grade computing hardware.

How often does that happen today? When was the last time you had to upgrade your PC to play an upcoming PC exclusive? Gaming can prosper under the power and versatility of PCs and do things we can’t dream of doing now. But since studios can’t forfeit consoles, we’re forced to live within the parameters of dated tech that won’t see those dreams realized as quickly. It’s hard to fault developers for wanting to stay afloat on console software sales, but in doing so they also stunt the medium’s growth. It’s a far cry from the continually moving benchmarks of PC gaming’s earlier days.

The elephant in the room

With the rare exception of companies like Cloud Imperium Games, very few developers are willing to outright say that console power will never be enough for the best possible gaming experience. Even fewer would be willing to live by that statement and truly attempt to push the boundaries of gaming tech. Doing so would rule out an equivalent console port entirely, which would leave an awful lot of sales on the table.

The late ’90s and early 2000s saw game developers taking advantage of the absolute most powerful tech available at the time. They didn’t need to sell millions upon millions of copies to break even. Now, games are made to run on machines that are half a decade old and counting. That’s the ceiling now: 8GB of RAM, only so much hard drive space, only so much processing power, and only so much graphics power to push the medium forward. We’ll probably get the next generation from Sony and Microsoft in the next year or two, which will just start this silly dance all over again. That is, unless more developers are willing to forgo console sales and try to take their games to the next level.